CVPR 2024 (Highlight)

DiVa-360: The Dynamic Visual Dataset for Immersive Neural Fields

1Brown University

2CVIT, IIIT Hyderabad

3I3S-CNRS/Université Côte d’Azur

*[Equal Contribution]

Abstract

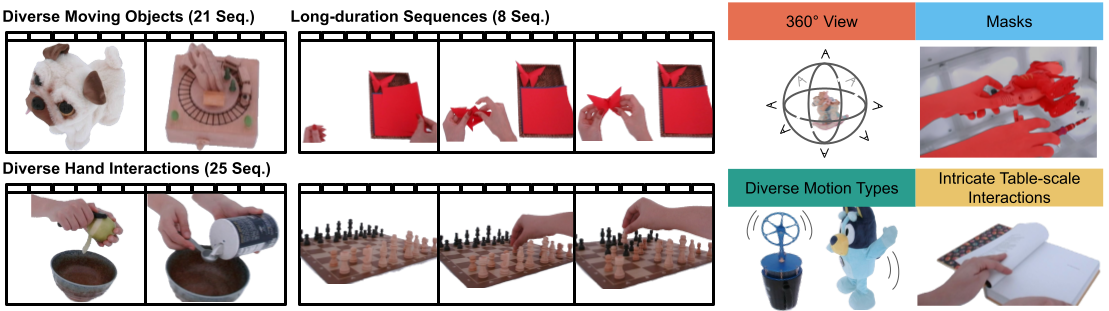

Advances in neural fields are enabling high-fidelity capture of the shape and appearance of dynamic 3D scenes. However, these capabilities lag behind those offered by conventional representations such as 2D videos because of algorithmic challenges and the lack of large-scale multi-view real-world datasets. We address the dataset limitations with DiVa-360, a real-world 360° dynamic visual dataset that contains synchronized high-resolution and long-duration multi-view video sequences of table-scale scenes captured using a customized low-cost system with 53 cameras. It contains 21 object-centric sequences categorized by different motion types, 25 intricate hand-object interaction sequences, and 8 long-duration sequences for a total of 17.4M frames. In addition, we provide foreground-background segmentation masks, synchronized audio, and text descriptions. We benchmark state-of-the-art dynamic neural field methods on DiVa-360 and provide insights about existing methods and future challenges in long-duration neural field capture.

Downloading Data

You can download the data here. Please note that the dataset is large. Here is a breakdown of required storage:

- Raw Data: 1.4 TB

- Processed Data: 1.8 TB

- Trained Models: 6 TB

- Rendered Videos: 63.1 GB
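Because the full dataset is several terabytes, it can help to check free disk space before starting a download. The following is a minimal sketch, not part of the official tooling: the dictionary keys, the function names, and the use of decimal units (1 TB = 1000 GB) are assumptions made for illustration, and only the sizes themselves come from the breakdown above.

```python
import shutil

# Sizes from the storage breakdown above, in gigabytes.
# Assumption: decimal units (1 TB = 1000 GB); key names are illustrative.
REQUIRED_GB = {
    "raw": 1400.0,
    "processed": 1800.0,
    "trained_models": 6000.0,
    "rendered_videos": 63.1,
}


def required_gb(parts):
    """Total storage in GB needed for the selected parts of the dataset."""
    return sum(REQUIRED_GB[p] for p in parts)


def has_room(path, parts):
    """Return True if the filesystem holding `path` has enough free space."""
    free_gb = shutil.disk_usage(path).free / 10**9
    return free_gb >= required_gb(parts)


if __name__ == "__main__":
    wanted = ["raw", "processed"]
    print(f"Need {required_gb(wanted):.1f} GB; enough room here: {has_room('.', wanted)}")
```

Downloading only the parts you need (for example, the processed data without the trained models) reduces the requirement considerably, since the trained models account for most of the total.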

Sequences

Here is a list of the sequences:

- battery

- blue_car

- bunny

- chess

- clock

- dog

- drum

- flip_book

- horse

- hour_glass

- jenga

- k1_double_punch

- k1_hand_stand

- k1_push_up

- keyboard_mouse

- kindle

- maracas

- music_box

- pan

- peel_apple

- penguin

- piano

- plasma_ball

- plasma_ball_clip

- poker

- pour_salt

- pour_tea

- put_candy

- put_fruit

- red_car

- scissor

- slice_apple

- soda

- stirling

- tambourine

- tea

- tornado

- trex

- truck

- unlock

- wall_e

- wolf

- world_globe

- writing_1

- writing_2

- xylophone

Here is a list of the long-duration sequences:

- chess_long

- crochet

- jenga_long

- legos

- origami

- painting

- puzzle

- rubiks_cube
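The two lists above contain 46 and 8 names respectively, which matches the 21 + 25 + 8 = 54 sequences stated in the abstract. As a rough sketch of how the names might be used to verify a local copy, the snippet below checks which sequences are missing from a download directory. The one-subdirectory-per-sequence layout and the function name `missing_sequences` are assumptions for illustration, not a description of the actual release structure.

```python
from pathlib import Path

# Sequence names copied from the lists above.
SEQUENCES = [
    "battery", "blue_car", "bunny", "chess", "clock", "dog", "drum",
    "flip_book", "horse", "hour_glass", "jenga", "k1_double_punch",
    "k1_hand_stand", "k1_push_up", "keyboard_mouse", "kindle", "maracas",
    "music_box", "pan", "peel_apple", "penguin", "piano", "plasma_ball",
    "plasma_ball_clip", "poker", "pour_salt", "pour_tea", "put_candy",
    "put_fruit", "red_car", "scissor", "slice_apple", "soda", "stirling",
    "tambourine", "tea", "tornado", "trex", "truck", "unlock", "wall_e",
    "wolf", "world_globe", "writing_1", "writing_2", "xylophone",
]

LONG_SEQUENCES = [
    "chess_long", "crochet", "jenga_long", "legos",
    "origami", "painting", "puzzle", "rubiks_cube",
]


def missing_sequences(root, names):
    """Return the names that have no subdirectory under `root`.

    Assumption: each sequence lives in a folder named after it.
    """
    root = Path(root)
    return [name for name in names if not (root / name).is_dir()]


if __name__ == "__main__":
    # Hypothetical local path; replace with wherever the data was downloaded.
    print(missing_sequences("./diva360", SEQUENCES + LONG_SEQUENCES))
```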

Dynamic Data Examples

Note: These videos have sound

Dynamic Baseline Examples

Object Centric Sequence

Note: This video has sound

Hand-Object Interaction Sequence

Note: This video has sound

Long Duration Sequence

Note: This video has sound

Citations

@inproceedings{diva360,

title={DiVa-360: The Dynamic Visual Dataset for Immersive Neural Fields},

author={Cheng-You Lu and Peisen Zhou and Angela Xing and Chandradeep Pokhariya and Arnab Dey and Ishaan N Shah and Rugved Mavidipalli and Dylan Hu and Andrew Comport and Kefan Chen and Srinath Sridhar},

booktitle = {Conference on Computer Vision and Pattern Recognition 2024},

year={2024}

}

Acknowledgements

This work was supported by NSF grants CAREER #2143576 and CNS #2038897, ONR grant N00014-22-1-259, ONR DURIP grant N00014-23-1-2804, a gift from Meta Reality Labs, an AWS Cloud Credits award, and NSF CloudBank. Arnab Dey was supported by the H2020 COFUND program BoostUrCareer under Marie Skłodowska-Curie grant agreement #847581. We thank George Konidaris, Stefanie Tellex, and Rohith Agaram.

Contact

Cheng-You Lu (cheng-you.lu@student.uts.edu.au)

Peisen Zhou (peisen_zhou@alumni.brown.edu)

Angela Xing (angela_xing@brown.edu)